A Semantic Layer for:

Agentic AI & Natural Language Query

Trusted, Consistent Metrics

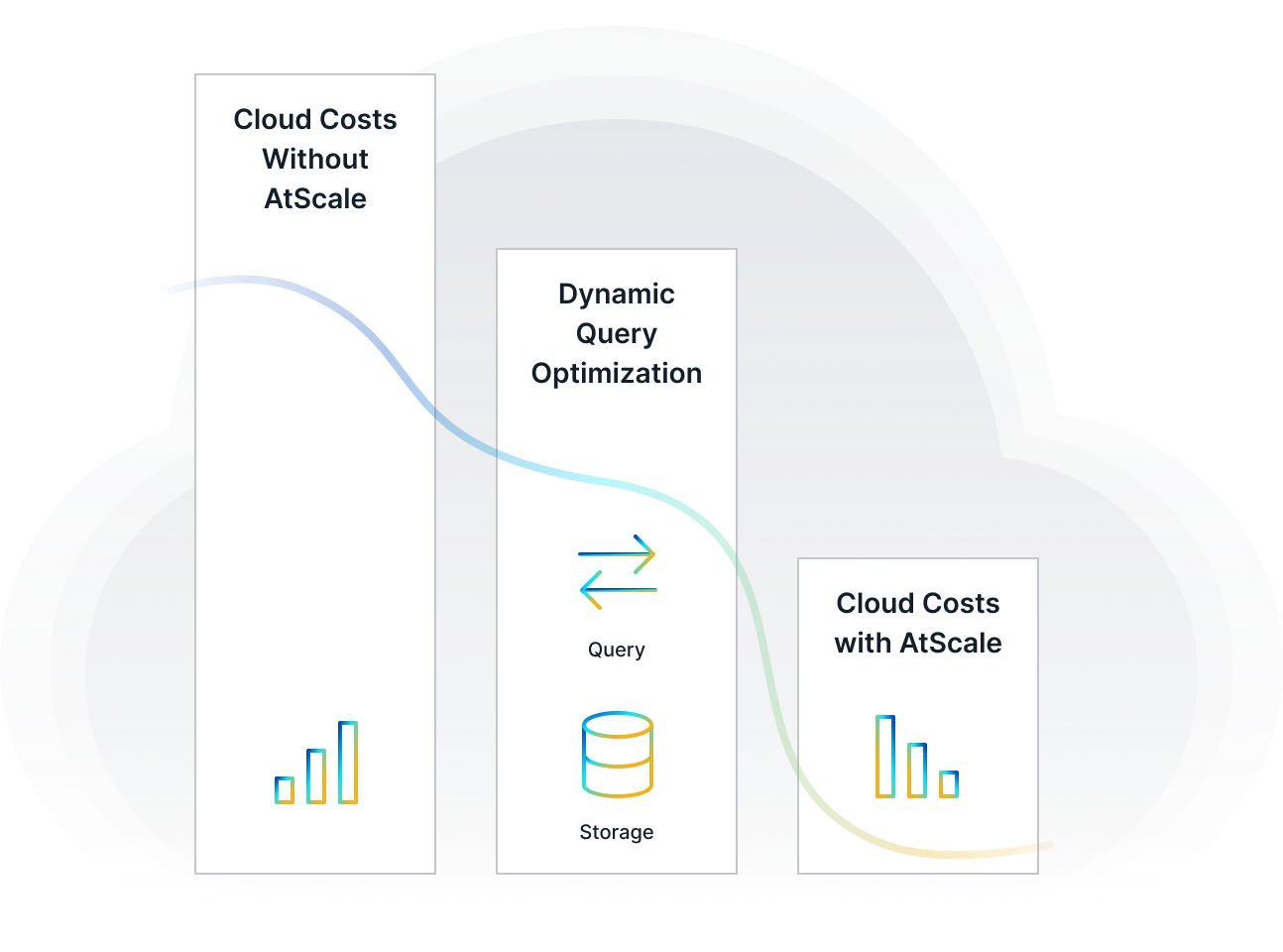

Optimized Cloud Costs & Performance

The leading platform for governed, AI-ready metrics at scale

Connect any data source to any AI/BI tool without moving your data

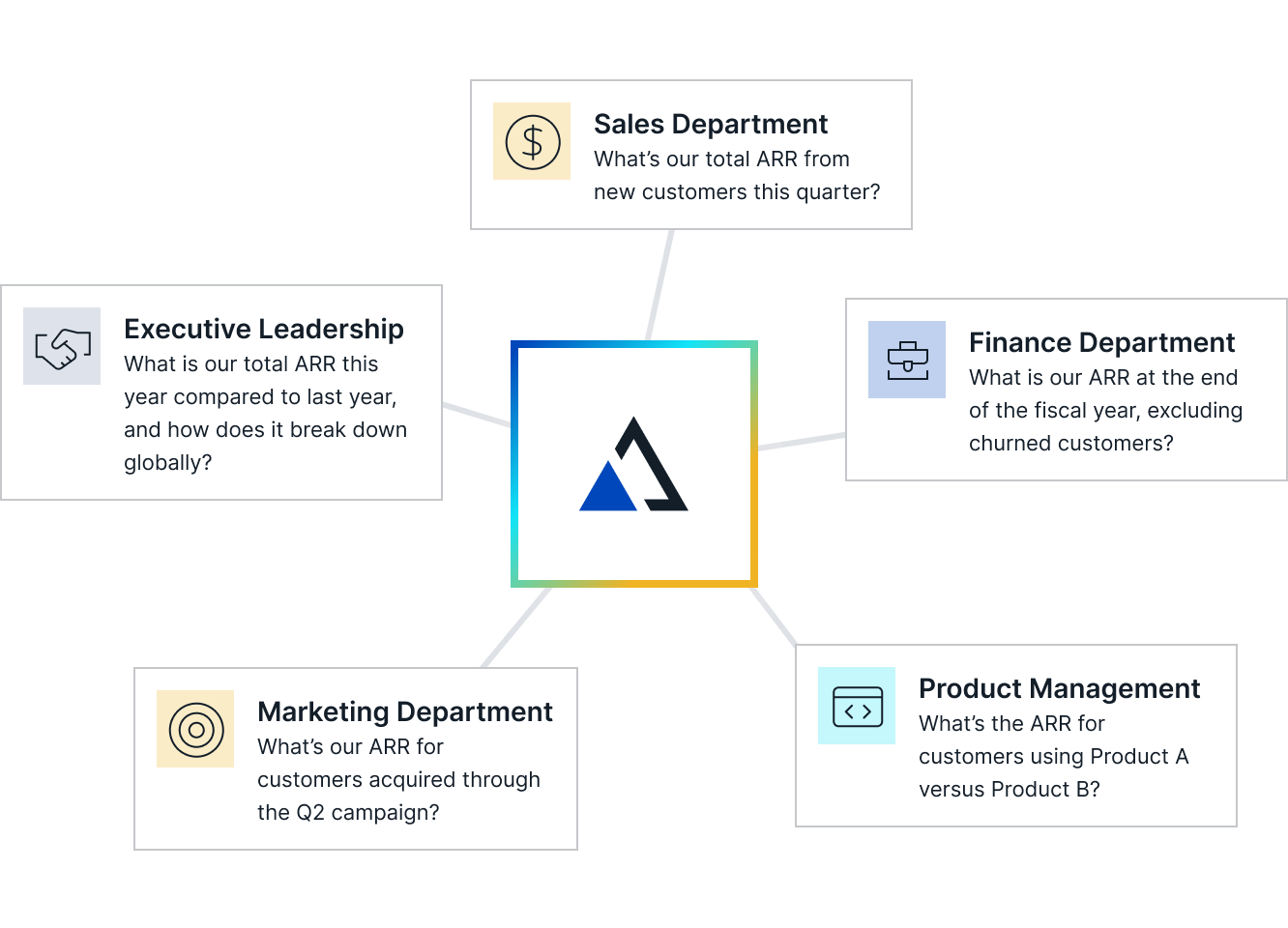

A semantic layer maps complex data to familiar business terms, like product, revenue, or customer, to create a unified, governed view of data across your organization. It empowers users and AI agents to access accurate insights autonomously.
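As a rough illustration of the mapping described above, the sketch below defines business terms once and compiles a request phrased in those terms into warehouse SQL. It is not AtScale's API or SML syntax; the table name, column names, and the `compile_query` helper are hypothetical and exist only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A business metric defined once and reused by every consumer."""
    name: str
    expression: str  # SQL aggregate over physical columns


@dataclass(frozen=True)
class Dimension:
    """A business term that maps to one physical column."""
    name: str
    column: str


# Hypothetical model: business terms as keys, physical columns as values.
METRICS = {"revenue": Metric("revenue", "SUM(f.net_amt_usd)")}
DIMENSIONS = {
    "product": Dimension("product", "f.prod_nm"),
    "customer": Dimension("customer", "f.cust_nm"),
}
FACT_TABLE = "sales_fact_v2 AS f"  # hypothetical physical table


def compile_query(metric: str, by: list[str]) -> str:
    """Translate a request in business terms into SQL for the warehouse."""
    m = METRICS[metric]  # raises KeyError for terms that are not defined
    dims = [DIMENSIONS[d] for d in by]
    select = [f"{d.column} AS {d.name}" for d in dims]
    select.append(f"{m.expression} AS {m.name}")
    sql = f"SELECT {', '.join(select)} FROM {FACT_TABLE}"
    if dims:
        sql += " GROUP BY " + ", ".join(d.column for d in dims)
    return sql


print(compile_query("revenue", by=["product"]))
```

Because every BI tool or agent would go through the same definitions, "revenue" resolves to the same expression wherever it is requested, and a term that has not been defined fails instead of being guessed.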

Data Warehouse / Lakehouse: Redshift, Databricks, Google BigQuery, Snowflake, Azure Synapse, Cloudera

Semantic Layer: Data Democratization & Governance, Cloud Cost Management & Performance, Enabling Gen AI with Business Context

Gen AI / LLM, BI Tools, AI Agents / Chatbots, Applications

Modern Analytics for Every Role and Data Stack

Business Intelligence

Enable analysts to build trusted metrics for BI, dashboards, and AI, with no duplication required.

Analytical Applications

Deliver embedded analytics with governed metrics and blazing-fast query performance.

Data Modeling

Build semantic models with AI using unified code-first and no-code modeling, for engineers and analysts alike.

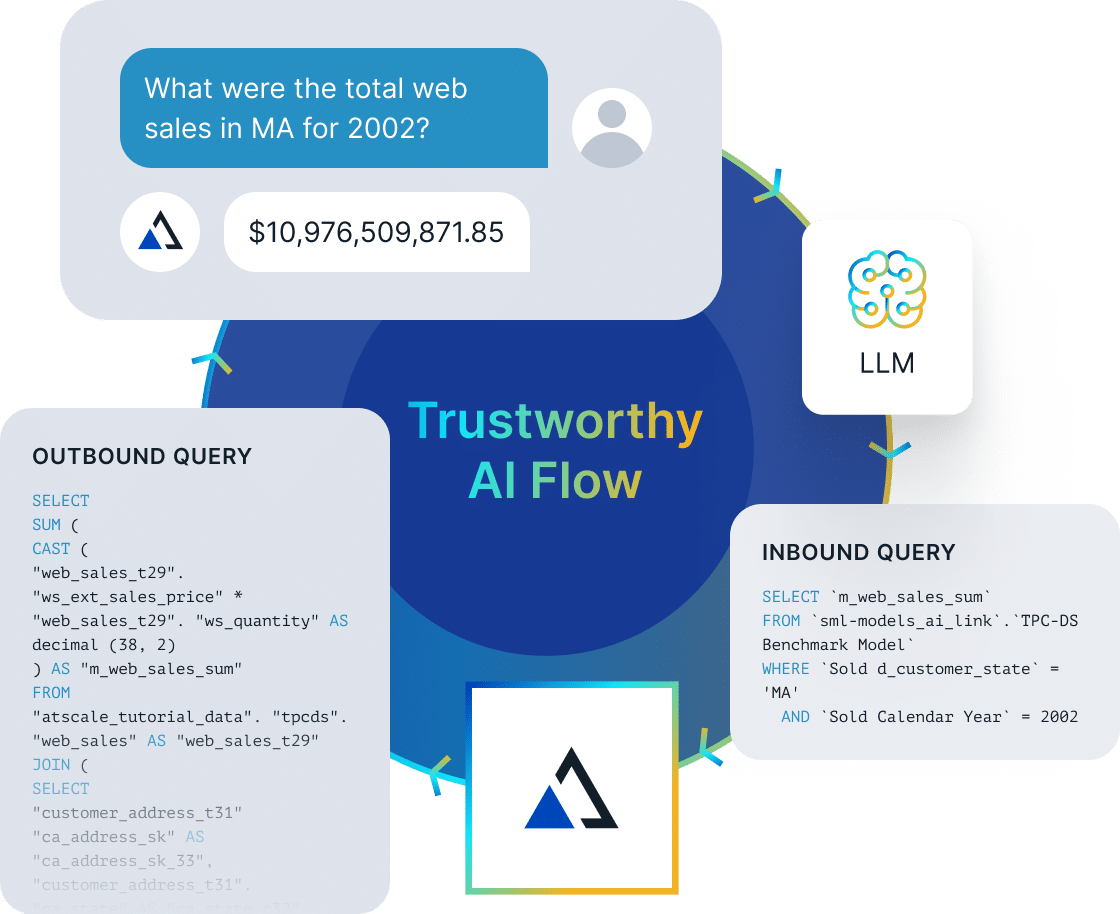

Agentic AI & Natural Language Query

Feed LLMs and agents with governed business context, no wrangling, no hallucinations.

See AtScale In Action

The Universal Semantic Layer for Enterprises

Empower teams with secure, real-time data access, governed semantic modeling, and universal compatibility across tools and protocols.

Agentic AI Ready

Power agents that act, not just answer. With AtScale, agents query, compute, and reason over trusted business data.

Open-Source Semantics

Powered by open-source Semantic Modeling Language (SML) for portable, governed models.

Live Cloud Connectivity

Connect live to leading cloud data platforms without moving data. Agents get secure, real-time access.

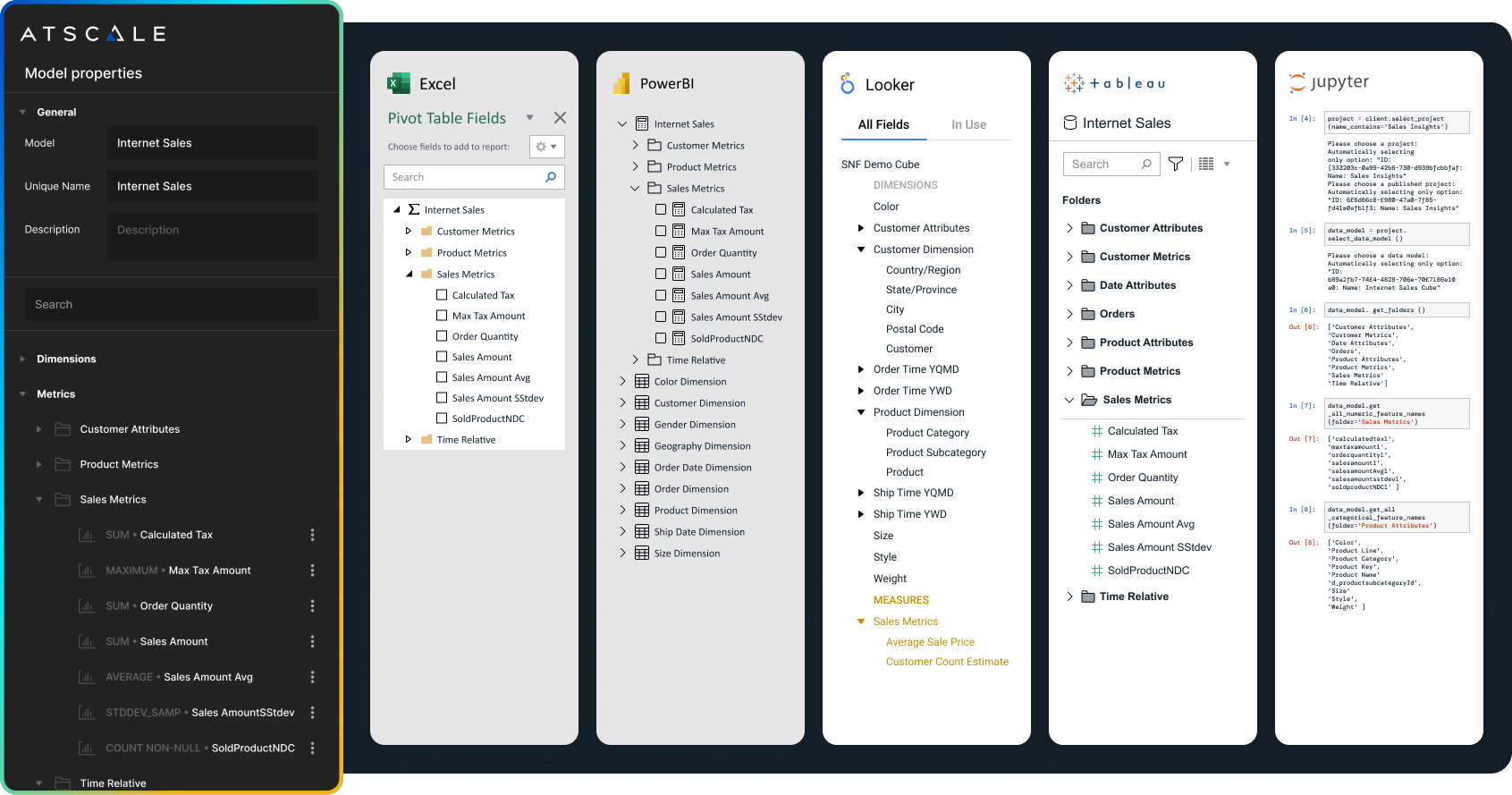

Universal BI Integration

Your business logic works across leading BI tools with sub-second query performance and consistent results.

Governed Semantics for Agents & Humans

Define once. Use everywhere. AtScale makes data models reusable and governed for people and agents.

Multi-Dimensional Business Logic

Model complex metrics, time logic, and drilldowns with a cloud-native semantic layer built for AI agents and BI tools.

Unify Your Data Ecosystem

Deep multi-protocol integrations support SQL, MDX, DAX, Python, and REST, connecting seamlessly with a range of BI tools and visualization platforms.